Chapter 13 Preference Data and Reward Signals

After a large model has completed Supervised Fine-Tuning (SFT), many teams enter a new stage: the model has essentially acquired task-execution capability. It can understand instructions, produce structured answers, mimic a business tone, and give responses that "look reasonable" in most common scenarios. But as soon as the model is placed into a production business environment, another, thornier problem surfaces: the model can answer, but it does not reliably make the choices the organization wants.

The word "choice" here does not refer to the low-level sampling behavior of which token the model picks next. It refers to how the model selects among many "basically correct" ways of answering: which style it leans toward, which value ordering it follows, which goals it prioritizes, and how it trades off when goals conflict. A model that has not undergone preference alignment may not be factually wrong, but it can display problems such as verbosity, sycophancy, excessive conservatism, ambiguity, overconfidence, over-templated answers, loose risk boundaries, or inconsistent business positioning. It is not unusable, but it cannot be used in a stable, scalable, and worry-free way.

Precisely for this reason, preference data and reward signals occupy an independent position in the model lifecycle. Their job is not to teach the model "what knowledge exists in the world," nor to teach the model "what the standard answer to a task is," but to further specify: when multiple candidate behaviors are all plausible, which ones deserve to be encouraged, which must be suppressed, which are acceptable in ordinary scenarios but disallowed in high-risk ones, and which may please users yet violate business norms. In other words, preference learning is not about adding knowledge — it is about shaping value ordering.

For teams responsible for data design, annotation specifications, training interfaces, and launch governance, this means preference data cannot be treated as a simple extension of SFT data. SFT mostly specifies "what the model can do," while preference data specifies "in what manner the model does these things, whom it prioritizes, how it handles conflicts, and when it should stop." The former is mainly about usability; the latter further addresses controllability, consistency, and organizational fit. Many teams, after finishing SFT, feel the model is "good enough"; more mature teams realize that the preference stage is the key engineering link where abstract business requirements, risk boundaries, and brand style are translated into training signals.

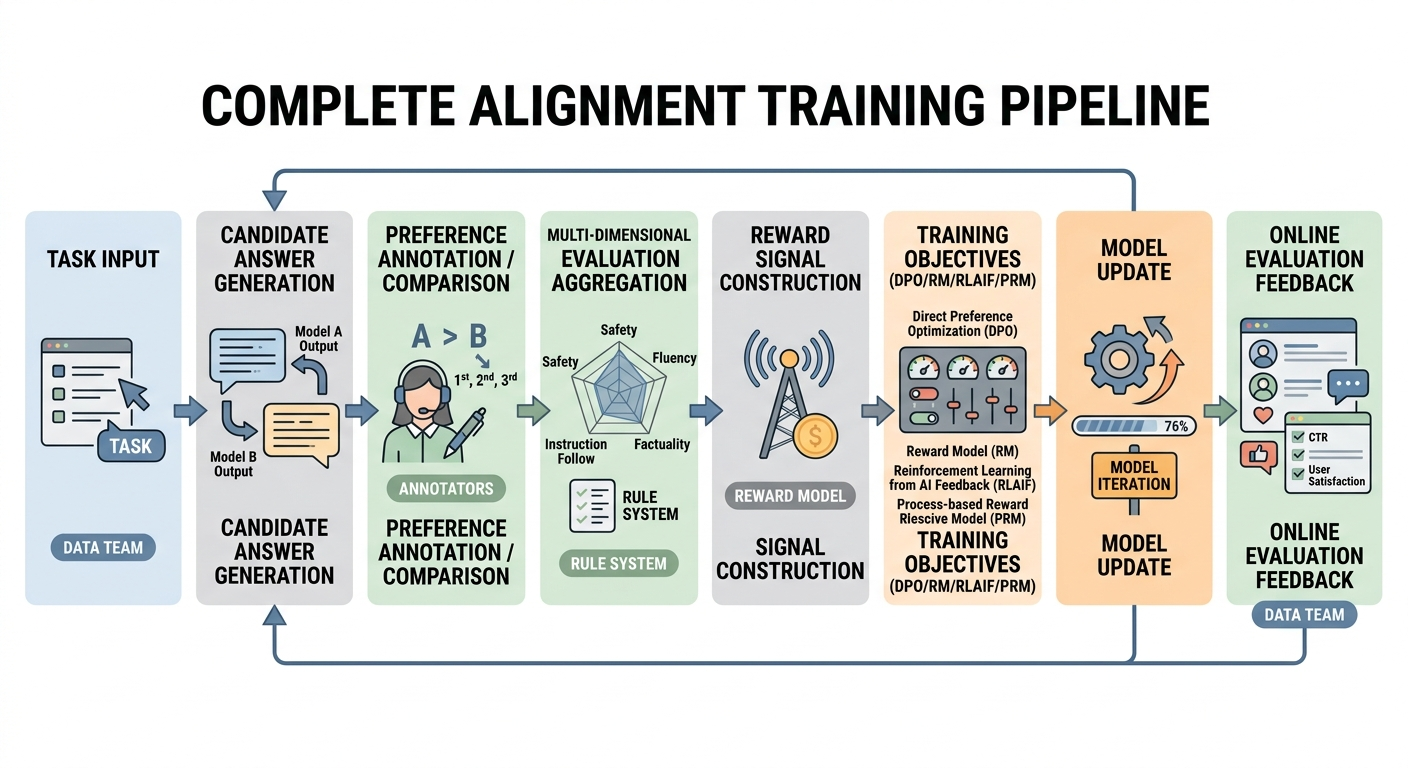

This chapter is aimed at team members who need to take preference learning from concept to concrete data construction and training interfaces. It systematically discusses the role of preference data, the relationship between preference pairs and reward models, the design of process rewards, the aggregation and Pareto trade-offs of multi-objective preferences, preference sources and supervision modes, noise and consistency governance, and how this data maps onto training methods such as DPO, RM, RLAIF, and PRM. We particularly emphasize one core view: preference learning is not "doing one more round of human feedback" — it is explicitly building an organization-level behavior-ordering system; reward signals are not just an evaluation tacked on during training, but the key interface that formally converts this ordering system into a learnable target for the model.

13.1 The Role of Preference Data

Why preference data determines a model's behavioral style

If SFT tells the model "how it should answer," then preference data actually determines "when multiple answerable options exist, which kind of answer the model leans toward." Many teams initially underestimate this, because on the surface, SFT samples also have style, wording, and business rules — it seems that as long as the reference answer is written well enough, the model will naturally learn the desired behavior. But reality is not so simple.

The essence of SFT is imitating a single target output. It encourages the model to approximate the reference answer given an input, thereby forming a distributional preference for "what looks like a correct answer." However, many real-world scenarios have no unique answer. For the same question, there could simultaneously exist a more concise answer, a more complete answer, a more cautious answer, a more encouraging answer, or one that emphasizes boundaries more. They can all be self-consistent, but the organization may not treat them equally. What really determines behavioral style is not whether the model can produce these different types of answers, but which way it tends to go among them.

Behavioral style is rarely decided directly by a single template, tone word, or rule — it gradually crystallizes as a statistical result of many local choices. A model perceived by users as "professional" is usually not so because it constantly says "from a professional perspective"; it is because, in many boundary cases, it tends to retain uncertainty, explain rationale, and state limitations. A model perceived as "helpful" is not so because it mechanically piles on information, but because it more often prioritizes the key steps needed to complete the task rather than background filler. Style, in other words, is a ranking outcome rather than just a surface feature.

From a data-construction perspective, preference data shapes behavioral style through at least four layers. First, it defines the comparison dimensions. Whether the team requires prioritizing truthfulness, helpfulness, politeness, executability, compliance, or conciseness — once these dimensions enter preference judgments, the model will gradually respond to them. Second, it defines the priorities among these dimensions. In high-risk tasks, correctness and boundary statements must outweigh naturalness and fluency; in marketing scenarios, brand consistency may matter more than open-ended creativity. Third, it defines how trade-offs are made under conflict — when two candidate outputs each have merits and weaknesses, which should win. Finally, it defines the range of variation the organization can accept: which stylistic differences are merely optional, and which represent unstable behaviors that must be eliminated.

In a sense, preference data is what turns abstract "behavioral style" into training-visible facts of local comparison. Only when such comparisons are constructed systematically and at scale does the model bake "what counts as the kind of answer we want" into a stable bias in its parameters. Without preference data, a model can only approximate within the imitation space of SFT; with preference data, it begins to form explicit choice tendencies. Preference data is therefore not a decorative module in the training pipeline — it is the core interface for style shaping.

Looking further, preference data matters because it turns "what the organization wants the model to become" from an abstract slogan into an executable supervisory structure. Many teams say they want the model to be "professional, trustworthy, natural, robust, and deployable," but if these requirements are not broken down into candidate comparisons and annotation rules, they stay at the level of meeting-room language and never enter parameter updates. The model cannot see slogans; it can only see local win/lose relations in training samples. Who wins, why they win, and under what scenarios they win — these directly shape model behavior far more than macro-level visions.

How preference data converts abstract values into training signals

What preference learning actually solves is not simply collecting more "user-liked" samples — it is converting originally vague value judgments into a form of supervision that can enter training. For an organization, many requirements sound reasonable, such as "be cautious," "be polite," "have boundaries," "help users complete tasks," but these only become training signals when they land on comparable candidate outputs in concrete samples.

For example, when a team says "the model should be more robust," that itself is not a directly trainable label. Only when, for the same input, candidate A gives an affirmative judgment while candidate B explicitly states insufficient evidence, limiting conditions, and suggested scope, and the annotation system consistently judges B as superior — only then is "robustness" translated into a ranking bias the parameters can perceive. Likewise, "the model should be more helpful to users" does not simply mean writing more; it means using many comparisons to teach the model when to prioritize steps, when to prioritize conclusions, and when to first frame the problem before explaining.

In this sense, the core value of preference data is not just "filtering out better answers," but making the organization's implicit value function explicit. It forces the team to answer a series of questions previously evaded: when correctness conflicts with naturalness, who wins; when safety boundaries conflict with user experience, who wins; when completeness conflicts with conciseness, who wins. Preference learning does not invent these conflicts — it forces the system to confront them and pin them down in data structures.
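One way to pin such conflict decisions down in a data structure is an explicit priority order per scenario, applied lexicographically to per-dimension judgments. The following is a minimal sketch under stated assumptions: the scenario names, dimension names, and the `resolve_pair` helper are hypothetical illustrations, not part of any specific annotation tool.

```python
from typing import Dict, List, Optional

# Hypothetical priority orders: earlier dimensions win conflicts in that scenario.
PRIORITY: Dict[str, List[str]] = {
    "high_risk": ["correctness", "boundary_statement", "naturalness"],
    "marketing": ["brand_consistency", "naturalness", "completeness"],
}

def resolve_pair(scenario: str, judgments: Dict[str, str]) -> Optional[str]:
    """Return "A", "B", or None (tie) by lexicographic priority.

    judgments maps a dimension name to "A", "B", or "tie", i.e. which
    candidate an annotator judged better on that single dimension.
    """
    for dim in PRIORITY[scenario]:
        verdict = judgments.get(dim, "tie")
        if verdict in ("A", "B"):
            return verdict  # the highest-priority non-tied dimension decides
    return None

# Candidate A reads more naturally, but B states its limits more clearly.
judgments = {"correctness": "tie", "boundary_statement": "B", "naturalness": "A"}
print(resolve_pair("high_risk", judgments))  # B
```

A strict lexicographic order is only one possible design. It makes the answer to "who wins" fully explicit, at the cost of ignoring how large each per-dimension difference is.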

Why behavioral-style stability depends on the preference distribution

The reason a model's behavioral style becomes stable is not because some rule is memorized, but because a large number of preference samples form a statistically consistent supervisory distribution. Style is not determined by a single sample but by an aggregate tendency repeatedly reinforced by a population of samples.

This is rather different from knowledge learning. With factual questions, the model either remembers a fact or it does not. Stylistic behavior is more like being gradually pushed in some direction within a fuzzy space. A model does not automatically transfer the habit "in high-risk scenarios, state limitations first" to all related tasks just because it saw one such example; what it really learns is which forms of expression, answer structures, and tonal choices more frequently receive positive feedback. Style, in short, is formed not by memorizing rules but by repeatedly experiencing similar trade-offs.

Suppose a team wants the model to always state limitations before giving advice in high-risk scenarios. If such samples appear only sporadically in the training set, the model will likely reproduce this behavior only on a few similar inputs and fail to generalize it into a stable style. Only when this preference is consistently repeated across different topics, expressions, and difficulty levels does the model learn it as a deeper behavioral inclination. Conversely, if half of the training samples reward cautious expression while the other half reward emphatic affirmation, what the model ultimately learns is not a clear style but an unstable mixed policy.

This instability often does not manifest in obvious ways. It is not necessarily blatant errors; more commonly, it looks like: sometimes cautious, sometimes assertive; sometimes stating boundaries first, sometimes jumping straight to judgment; sometimes restrained in tone, sometimes suddenly overconfident. Any single answer may be defensible, but viewed together they reveal that the model lacks a unified behavioral center of gravity. For real products, this "sometimes right, sometimes decent, but overall unstable" state is often harder to handle than outright content errors — because it erodes user expectations about the system's overall behavior, not just one isolated point.

The preference distribution also matters because style is usually multi-dimensional rather than single. Caution, conciseness, politeness, clear structure, evidence orientation, no over-promising — these may look like independent requirements, but they interact during training. An answer might be preferred for being "more complete," but does that completeness reinforce helpfulness or encourage verbosity? An answer might be chosen for being "more decisive," but does that decisiveness reinforce clarity or indulge overconfidence? An answer might win for being "warmer," but does that warmth improve experience or loosen boundaries? When the preference distribution is poorly designed, what the model ends up learning may not be the style the team wants, but an ambiguous mishmash of mutually conflicting habits.

The biggest danger for preference samples is therefore not insufficient quantity but inconsistent direction. A large number of samples with unstable direction usually teaches the model only "to randomly pick a phrasing in different contexts," whereas a smaller number of samples with consistent direction more readily produces a stable behavioral tendency. Many teams are under the illusion that writing a few more well-liked answers and running a few more rounds of comparisons will make the style emerge. What is really critical is not "writing more" but "whether the same orientation is stably repeated across different samples." Without that, the model sees only scattered preferences, not a generalizable style pattern.

Take high-risk responses: an effective preference distribution usually does not just demand caution on a handful of canonical dangerous questions — it extends that orientation across a whole swath of adjacent scenarios. In legal questions with insufficient information, retain conditions; in medical questions with unclear symptoms, triage first; in financial advice with weak evidence, avoid overconfidence; in enterprise policy Q&A with ambiguous authority, state applicable scope first. On the surface these are different tasks, but underneath they jointly reinforce one thing: when information is insufficient or risk is elevated, the model should not rush to produce a seemingly complete conclusion, but prioritize stating boundaries, premises, and limitations. Only when such cross-topic, cross-expression, cross-task repetition is abundant does the model gradually learn "stabilize boundaries first" as a default behavior rather than an exception in a few question types.

Conversely, if different data sources within a team do not unify this preference, problems quickly arise. If the safety team favors conservative phrasing while the operations team prefers "concrete and direct" answers, and the product team worries that over-conservatism hurts user experience — then different batches will reinforce different orientations. The result is not balance but oscillation. Sometimes it acts like a safety assistant, sometimes like a marketing copywriter, sometimes like a Q&A bot afraid of liability. Each persona shows up a little, but no stable center exists.

That is why "distribution design" in preference learning is so crucial. The team must care not only whether each individual preference label is correct, but also whether the whole batch is statistically consistent, whether it covers key conflict scenarios, and whether the dimensions the organization truly cares about are present at sufficient sample proportions. Behavioral style is fundamentally a distributional consequence, not an aggregation of clever sentences.
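A batch-level check of this kind can be sketched in a few lines. The example below assumes each preference pair has already been tagged with a scenario and with a style label for the winning and losing answer; the `Pair` fields, the tag values, and the 0.9 threshold are hypothetical choices for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple

class Pair(NamedTuple):
    scenario: str      # e.g. "high_risk" (hypothetical tag)
    winner_style: str  # style tag of the preferred answer
    loser_style: str   # style tag of the rejected answer

def direction_consistency(pairs: Iterable[Pair]) -> Dict[tuple, float]:
    """For each (scenario, style contrast), return the share of pairs won by
    the majority style: 1.0 is fully consistent, 0.5 is a coin flip."""
    wins = defaultdict(lambda: defaultdict(int))
    for p in pairs:
        # Sort the two styles so A-vs-B and B-vs-A land in the same bucket.
        contrast = (p.scenario, tuple(sorted((p.winner_style, p.loser_style))))
        wins[contrast][p.winner_style] += 1
    return {
        key: max(counts.values()) / sum(counts.values())
        for key, counts in wins.items()
    }

batch = (
    [Pair("high_risk", "cautious", "assertive")] * 3
    + [Pair("high_risk", "assertive", "cautious")]
    + [Pair("marketing", "warm", "formal")] * 2
)
result = direction_consistency(batch)
flagged = [key for key, share in result.items() if share < 0.9]
print(flagged)  # [('high_risk', ('assertive', 'cautious'))]
```

The same counts also show coverage: a contrast that never appears in `result` for a scenario the team cares about is a gap in the distribution, not evidence of consistency.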

Going further, the preference distribution shapes not only "what the model likes to say" but also "what it defaults to caring about first." Some distributions push the model to give conclusions first; others push it to explain reasoning first. Some make it more willing to supply information; others make it more willing to admit uncertainty. Some encourage proactive reassurance and elaboration; others reinforce conciseness and restraint. The style that finally emerges is not produced by who phrases things most eloquently in one training round — it is the cumulative tendency built up across many similar choices. Once that tendency is in place, it shows up across new questions, new phrasings, and new scenarios as the consistency of "the same system speaking."

So in preference data construction, what must really be guarded against is not the occasional mislabel but the silent drift of the overall distribution. As long as the distribution is scattered, contradictory, or self-conflicting, no clear style will form; as long as it is stable, persistent, and reproducible across scenarios, the model is pushed toward becoming a behaviorally coherent system — even if individual samples are not particularly "brilliant." Style stability, in the end, is not taught by a single sentence — it is pressed out gradually by an entire sample distribution.

Why preference alignment is still needed after SFT

A common misconception is: as long as SFT data is rich enough and the answers high enough in quality, preference alignment is not necessary. This sounds reasonable because high-quality SFT data really does substantially improve helpfulness and formatting consistency. However, from the nature of the training objective, SFT still cannot replace preference alignment, because the two answer different questions.

SFT mainly answers "what the model should learn to produce." Through teacher signals, it maps a set of inputs to a set of approved reference outputs, helping the model master task structure, domain knowledge, format templates, and semantic patterns. Preference alignment addresses another level: when multiple candidate outputs all pass SFT's "looks like the reference" standard, which one should the model prefer? This difference is especially obvious in open-ended generation. SFT can teach the model to answer; it usually cannot systematically suppress those "also reasonable-looking, but organizationally non-preferred" answer types.

For example, in many Q&A and assistant scenarios, an SFT-only model often exhibits "over-helpfulness": it tries to make every answer exhaustive, stuffing in everything it can think of, so that key suggestions are drowned in long preambles. Or the model may learn a habit of "feigning certainty," because in many training samples fluent, confident expression looks more like a complete reference answer, yet in production this style brings factual and compliance risks. Or SFT can teach the model to be polite in general, but cannot stably teach it to switch to a clearly bounded, standardized mode when a high-risk request appears.

The fundamental reason is that SFT is essentially point-to-point imitation, while preference alignment is inter-candidate ranking. The former excels at building task capability; the latter excels at shaping value order. Many issues hard to express in SFT — such as "this answer is not wrong but more verbose than another," "this answer is comprehensive but its boundary statements are weak," "this answer is natural but feels like an over-promise" — are better expressed through preference comparisons, because they are not absolute right/wrong but relative better/worse.
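The contrast between point-to-point imitation and inter-candidate ranking shows up directly in the shape of the loss. The sketch below compares a negative log-likelihood on a single reference answer with a Bradley-Terry style pairwise loss. The Bradley-Terry form is a common choice for pairwise objectives and is used here only as an illustration; the function names and the toy numbers are assumptions.

```python
import math

def sft_loss(logprob_reference: float) -> float:
    """Imitation: negative log-likelihood of the single reference answer."""
    return -logprob_reference

def pairwise_loss(score_chosen: float, score_rejected: float) -> float:
    """Ranking: -log(sigmoid(score_chosen - score_rejected)).

    Only the difference between the two scores matters, so the loss
    expresses "better than", not "correct".
    """
    margin = score_chosen - score_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

# SFT sees one target and is indifferent to which alternative was avoided.
print(sft_loss(math.log(0.25)))

# The pairwise loss falls as the chosen answer pulls ahead of the rejected one.
for chosen, rejected in [(0.0, 2.0), (0.0, 0.0), (2.0, 0.0)]:
    print(chosen, rejected, round(pairwise_loss(chosen, rejected), 4))
```

Adding the same constant to both scores leaves the pairwise loss unchanged, which is why this kind of signal suits judgments such as "not wrong, but more verbose than the other."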

Moreover, SFT struggles to express conflicts between objectives in a stable way. Real business is not single-dimensional optimization; multiple objectives coexist: helpfulness vs. safety, completeness vs. latency, explanation vs. conciseness, personalization vs. standardization, friendliness vs. accountability boundaries. SFT samples can implicitly contain these trade-offs, but usually cannot shape them into a stable, measurable, traceable structure across the dataset. Preference alignment, by contrast, makes these conflicts explicit: annotators compare multiple candidate outputs for the same input and provide reasoning, allowing the team to see its real value ordering.

So preference alignment is still needed after SFT — not because SFT was done poorly, but because SFT is inherently not responsible for ranking. A mature training pipeline does not treat preference learning as patching SFT; it treats it as the next layer of behavior shaping that inevitably follows. SFT solves "can the model do it"; preference alignment solves "what does the model tend to do" — and in deployable systems, the latter often directly determines user experience and business controllability.

SFT is good at teaching capability; preference alignment is good at deciding trade-offs

If we layer the training process functionally, SFT is more like building the basic skill library, while preference alignment is like building the scheduling principles. SFT gives the model abilities — answering questions, summarizing, drawing on knowledge, following formats, imitating domain expression. Preference alignment further specifies, when all these abilities exist, which combination the model should prefer.

The biggest difference between the two is not just "one teaches content, the other teaches style." It is where they act. SFT is more like "putting tools into the toolbox." Through many supervised samples, the model gradually learns what task shapes exist, what common output forms there are, which expressions are compliant, and which structures the system can accept. It resolves "can it" — whether the model has formed a basic capability interface. Once these capabilities exist, real systems quickly face a different class of issue: in this scenario, which response should the model lean toward? What should it protect first, what should it concede; where should it expand, where should it cut; where should it be decisive, where should it preserve conditions? That level of decision is not automatically settled by SFT.

For example, a model can write long answers or short ones; it can give strong recommendations or be cautiously reserved; it can be procedural or companionable. SFT makes it "all able," but does not automatically determine "which it picks more often." Real production systems usually fear not that the model can't, but that it can but chooses wrong. That is why preference alignment functions in engineering more like a "policy layer" than a "knowledge layer."

Such "can-but-chooses-wrong" issues are often not visible in offline tests. Many offline evaluations readily reveal whether the model hit the point, covered key information, or followed the format, but cannot fully expose its tendencies when multiple feasible answers exist. A response may have no obvious content error and meet basic requirements, yet be overly long, turning what could be one sentence into three paragraphs; it may jump too quickly to an affirmation, skipping the premises that should have been stated; it may be overly cheerful, sounding flippant in high-risk or serious-tone contexts. These are not capability gaps but priority-ordering biases.

From this angle, SFT answers "what actions the model should master," while preference alignment answers "among these actions, which should be the default." That is why the same base model, after being shaped by different preference data, can produce systems with very different temperaments. Some are clearly restrained; some are more proactive; some give conclusions first, some state conditions first; some lean toward warm, service-oriented companionship, others toward calm, terse professionalism. The capability gap is often not huge — the differences mostly come from "what is chosen by default."

This matters greatly in engineering, because "is this good to use" in a product is often determined not by capability ceiling but by default trade-offs. An enterprise customer-service assistant that always answers simple questions at length, or always gives background before steps, will quickly feel inefficient. A medical assistant that, faced with incomplete symptom descriptions, always tries to complete a full answer instead of triaging risk and asking key questions, is unsafe no matter how much medical knowledge it has. A legal assistant that, when documents are insufficient, can't help but trend toward "conclusion-like" answers, remains unsteady even if it can cite statutes. The problems are not "the model doesn't know" — they are "the model's defaults are off."

That is also why many teams experience the illusion that the model performs decently in offline capability tests but, once launched, still feels verbose, unstable, overly warm, overly conservative, or blurry on boundaries. The cause is usually not capability shortage but inadequately shaped trade-off mechanisms. Preference learning intervenes exactly at this step.

What preference alignment really handles is often not "can it be done" but "among multiple feasible options, which deserves to be repeatedly chosen." Faced with user follow-ups, the model can keep elaborating or first summarize concisely; faced with uncertain information, it can try to fill in or clearly state limits; faced with emotional users, it can lean toward reassurance or toward procedural progress. SFT gives the model these possibilities; preference alignment, through many comparison signals, tells it: in our product context, which is preferable, and which — though tenable — should not be the default.

Further, preference alignment is not just "tuning the model to look nicer"; it gives the model a stable decision center of gravity. Without that center, the model easily wobbles across different inputs, contexts, and phrasings. Today it's concise, tomorrow it spreads out; this time it's cautious, next time it rushes to conclusions; one turn is professional, the next becomes overly familiar. Users may not articulate where the problem lies, but they will clearly feel that the system has no fixed temperament. What preference alignment must solve is pressing that wobble into a predictable behavioral orientation.

So if SFT mainly broadens "what the model can do," preference alignment tightens "what the model usually does." The former addresses the capability base; the latter addresses policy consistency. Without SFT, many actions cannot even be learned; without preference alignment, the model knows a bit of everything but rarely behaves like the same system over time. Deployable models usually shine not because of a single outstanding capability but because they combine a sufficiently broad capability surface with clear, stable, scenario-appropriate defaults.

From a team collaboration perspective, this division of labor is also valuable. The SFT stage is better suited to addressing task coverage, formatting, domain expression, and basic capability interfaces. The preference stage is better suited to handling the balance between conciseness and completeness, proactivity and restraint, efficiency and reassurance, certainty and reservation. If the two are not separated, teams end up dumping all problems onto SFT, hoping it can both teach everything and incidentally decide all behavioral preferences. The result is messier samples, blurrier training objectives, and a model that neither solidifies capability nor stabilizes trade-offs.

That is also why deciding whether a problem belongs to the SFT stage or the preference stage depends on whether it is "can't do" or "can but keeps choosing wrong." The former is a capability issue; the latter is a policy issue. Once these are cleanly separated, the post-training pipeline acquires layers: first give the model enough robust capability interfaces, then organize those capabilities through preference alignment into a behavioral order aligned with product goals. At that point, the model is no longer just "able to answer" — it begins to "answer in the way you want."

Overlap and conflict between human preference and business preference

In preference-learning discussions, "human preference" is often treated as a naturally legitimate target, as if collecting which answer humans like is enough to make the model better. But for enterprise-grade or task-oriented systems, what really needs modeling is rarely a single pure "human preference" — it is the superposition of multiple preference sources: ordinary users' interaction preferences, experts' professional judgments, business units' organizational goals, the floor requirements of risk and compliance, product design's expectations of experience pacing, and brand teams' demand for consistent phrasing and tone. They overlap significantly, but are by no means identical.

The overlap is usually intuitive. Users, business owners, and risk teams all generally want the model's answers to be correct, clear, non-fabricated, and problem-solving. These form the layer where preference learning most easily reaches consensus, and they are the source of universal dimensions like helpfulness, truthfulness, and intelligibility. But once we drill into finer behavioral ordering, conflicts become obvious.

User preference often tilts toward natural, timely, low-restriction, low-refusal, human-like, warmer-toned answers, even those that "decide for me." Business preference often demands clearer boundaries, more restrained commitments, more auditable information, and more uniform expression. In some product scenarios, users may prefer the model to give clear recommendations even on weak evidence, while the business side requires the model to expose uncertainty and to avoid strong claims on thin evidence. In other scenarios, users prefer "friend-like" conversation, while enterprise services require formality, robustness, and accountability. The former emphasizes closeness; the latter emphasizes institutional boundaries.

This means preference data cannot rest on the crude assumption "let annotators pick the answer they like by intuition." Preferences must be decomposed into components that can be discussed, quantified, and reviewed. Otherwise, so-called "preference collection" only mixes annotator habits, short-term experience, and surface style. A truly mature preference system places "what humans like," "what the organization permits," and "what the business optimizes" into the same diagram, and explicitly handles their overlaps and conflicts.

It is here that Pareto trade-offs become very important. For multi-objective systems, the goal is usually not a single optimum but a set of acceptable solutions that strike reasonable balances across objectives. For example, one answer may be very polite and readable but weak on boundary statements; another may be very robust but feel mechanical. The business doesn't abstractly say which is "better" — it judges by scenario: for this task class, we are willing to sacrifice some naturalness for higher safety and consistency; for another class, we permit the model greater expressive freedom. Preference data turns this strategy from verbal discussion into trainable ranking evidence.
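The idea of a set of acceptable solutions, followed by a scenario-specific choice, can be sketched as follows. The candidate names, the two objectives, the scores, and the scenario weights are all hypothetical; a real system would have more objectives and scores derived from annotation.

```python
from typing import Dict, List

Scores = Dict[str, float]  # objective name -> score, higher is better

def dominates(a: Scores, b: Scores) -> bool:
    """a dominates b if it is at least as good on every objective
    and strictly better on at least one."""
    return all(a[k] >= b[k] for k in a) and any(a[k] > b[k] for k in a)

def pareto_front(candidates: Dict[str, Scores]) -> List[str]:
    """Names of candidates that no other candidate dominates."""
    return [
        name for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]

def pick(front: List[str], candidates: Dict[str, Scores], weights: Scores) -> str:
    """Choose within the front using scenario-specific objective weights."""
    return max(front, key=lambda n: sum(weights[k] * candidates[n][k] for k in weights))

candidates = {
    "polite_but_vague": {"naturalness": 0.9, "safety": 0.5},
    "robust_but_stiff": {"naturalness": 0.5, "safety": 0.9},
    "balanced": {"naturalness": 0.7, "safety": 0.8},
    "dominated": {"naturalness": 0.4, "safety": 0.5},
}
front = pareto_front(candidates)
print(front)  # ['polite_but_vague', 'robust_but_stiff', 'balanced']
print(pick(front, candidates, {"naturalness": 0.2, "safety": 0.8}))  # robust_but_stiff
print(pick(front, candidates, {"naturalness": 0.8, "safety": 0.2}))  # polite_but_vague
```

The front removes only answers that are worse on every axis. Which remaining answer wins is a business decision that differs by task class, and that decision is what the preference labels record.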

Why "users like it" cannot directly equal "the system should learn it"

In lightweight discussions, preference learning is often simplified to "collect which answer users like more." But in real system design, this simplification often breaks down. A user may like an answer in the moment because it sounds smoother, is more decisive, more human-like, or quicker, or merely because it flatters the user's existing stance. What the system needs is not "instant satisfaction" but "long-term usable, controllable, auditable" behavior.

The two sometimes align and sometimes don't. In health, legal, financial, education, or enterprise-policy scenarios, users may prefer more confident answers, but the system must reward exposing uncertainty, indicating applicability boundaries, and recommending further verification. Users may prefer experiences with fewer refusals, fewer reminders, fewer disclaimers, but for high-risk requests, the organization must require the model to retain sufficiently strong boundary control. User experience is one source of preference; it is not the entirety of preference definition.

Therefore, a mature preference system must first distinguish "experience preference," "professional preference," "compliance preference," "brand preference," and "business preference," and then decide how they aggregate. Otherwise, "user-centric" can degrade into "short-term subjective-satisfaction-centric," which actually harms long-term system stability.
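One possible aggregation rule treats compliance as a hard filter and combines the remaining sources by weighted vote. This is a sketch under assumptions: the integer weights, the source names as dictionary keys, and the `aggregate` function are hypothetical, and an organization may well choose a different rule.

```python
from typing import Dict

# Hypothetical weights per preference source; compliance is not weighted
# because it acts as a veto rather than as one vote among several.
SOURCE_WEIGHTS: Dict[str, int] = {
    "experience": 3,
    "professional": 4,
    "brand": 1,
    "business": 2,
}

def aggregate(votes: Dict[str, str], compliance_ok: Dict[str, bool]) -> str:
    """Combine per-source votes ("A" or "B") into one label.

    compliance_ok maps each candidate to whether it passes compliance review.
    Returns "A", "B", "tie", or "reject_both".
    """
    allowed = [c for c in ("A", "B") if compliance_ok[c]]
    if not allowed:
        return "reject_both"
    if len(allowed) == 1:
        return allowed[0]  # the veto overrides every weighted vote
    score = {"A": 0, "B": 0}
    for source, choice in votes.items():
        score[choice] += SOURCE_WEIGHTS[source]
    if score["A"] == score["B"]:
        return "tie"
    return max(score, key=score.get)

# Users, brand, and business like the confident answer A; experts prefer B.
votes = {"experience": "A", "professional": "B", "brand": "A", "business": "A"}
print(aggregate(votes, {"A": True, "B": True}))   # A
print(aggregate(votes, {"A": False, "B": True}))  # B
```

Keeping the veto outside the weighted sum means that no amount of user enthusiasm can outvote a compliance failure, which matches the point that user experience is one source of preference rather than its whole definition.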

How business preference enters annotation specifications¶

If business preferences do not enter annotation specifications, they can hardly enter training. Many organizations, when discussing preference learning, leave business requirements at the principle level: "more on-brand," "more like a professional consultant," "robust but not mechanical." These are important, but must be further decomposed into operational judgment rules for annotators.

For example, "more on-brand" can be decomposed into: avoid over-colloquial phrasing, maintain consistent forms of address, avoid exaggerated promises, keep a fixed response structure. "More like a professional consultant" can be decomposed into: state the basis for judgment, expose uncertainty, give conditional constraints, avoid making baseless decisions for the user. "Robust but not mechanical" must be further split into the two dimensions of boundary statement and expression naturalness, with explicit priority under conflict.
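The decomposition described above can be written down as a small machine-readable rubric. This is a minimal sketch only: the `RUBRIC` layout, the rule wording, the `CONFLICT_PRIORITY` ordering, and the `missing_checks` helper are all hypothetical illustrations, not a schema prescribed by this chapter.

```python
# Hypothetical rubric: each abstract business principle is mapped to concrete
# yes/no judgment rules that an annotator can actually answer.
RUBRIC = {
    "on_brand": [
        "avoids over-colloquial phrasing",
        "keeps forms of address consistent",
        "avoids exaggerated promises",
        "follows the fixed response structure",
    ],
    "professional_consultant": [
        "states the basis for judgment",
        "exposes uncertainty",
        "gives conditional constraints",
        "avoids baseless decisions for the user",
    ],
    "robust_not_mechanical": [
        "boundary statement present",
        "expression reads naturally",
    ],
}

# Assumed priority when the two dimensions of "robust but not mechanical"
# conflict; a real team would set this per scenario.
CONFLICT_PRIORITY = {
    "robust_not_mechanical": ["boundary statement present", "expression reads naturally"],
}


def missing_checks(principle: str, answered: dict[str, bool]) -> list[str]:
    """Return the rubric rules for a principle that the annotator has not answered."""
    return [rule for rule in RUBRIC[principle] if rule not in answered]
```

A call such as `missing_checks("on_brand", {"avoids exaggerated promises": True})` returns the three rules still unanswered, which is one simple way an annotation tool could enforce that no operational rule is skipped.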

In other words, before business preferences enter training, they must undergo a "data-ization translation." Who does this translation, how it is done, and how annotators understand and execute it determine whether the preference data is usable. Training methods are only the consumer side; what actually converts business goals into supervisory structure is the data design itself.

Figure 13-1: Pipeline from preference data to reward signals

13.2 Preference Pairs, Scalar Rewards, and Process Rewards¶

Definitions of pairwise preference, scalar score, and process reward¶

Preference learning is often summarized as "giving the model feedback," but from a data engineering perspective, that statement is too vague. When feedback enters training, it must exist in a clearly defined data structure, and different training methods accept different feedback forms. For data teams, the first question is not "do we collect human feedback," but "in what form do we express feedback." In current mainstream practice, the three core forms are preference pairs, scalar rewards, and process rewards.

Pairwise preference is the most classic and currently most common form. The idea is: under the same input, generate two or more candidate outputs, then have a human, a model, or a rule system judge which is better. The typical data structure is:

(x, y_w, y_l)

where x is the input, y_w is the winning answer, and y_l is the losing answer. "Winning" does not mean absolutely correct; it means more preferred along the current comparison dimensions and in the current task context. Pairwise preference's biggest advantage is that it avoids the scale-inconsistency problems of absolute scoring. Annotators may not stably judge whether an answer is worth 3 or 4 points, but it is usually easier to judge which of two candidates is more worth keeping. For this reason, preference pairs are often viewed as a low-barrier, high-robustness data interface.

Scalar reward assigns a numerical score to a single output. It can be expressed as:

(x, y, r)

where r may be a discrete rating or a continuous value. Scalar rewards are intuitive and particularly suitable for training a Reward Model (RM), since an RM's goal is exactly to learn a function that maps inputs and outputs to real-valued scores. Scalar rewards have another advantage from a unified-interface perspective: they make downstream analysis, ranking, threshold cutoffs, and cross-system sharing easier. However, they more easily introduce scoring drift and scale bias. Different annotators may not interpret "4 points" and "5 points" the same way, and the density of high scores may differ across task pools. Scalar rewards therefore tend to require more governance effort in collection, calibration, and normalization.
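One common way to reduce scale bias between annotators is to standardize each score within the rater's own score distribution. The sketch below assumes each record carries hypothetical `rater` and `score` fields and that raters saw a comparable mix of tasks; if task pools differ per rater, this normalization alone is not enough.

```python
from collections import defaultdict
from statistics import mean, pstdev


def normalize_per_rater(records):
    """Add a z-score to each record, computed within that rater's own scores."""
    by_rater = defaultdict(list)
    for rec in records:
        by_rater[rec["rater"]].append(rec["score"])

    # Per-rater mean and population standard deviation.
    stats = {rater: (mean(s), pstdev(s)) for rater, s in by_rater.items()}

    normalized = []
    for rec in records:
        mu, sigma = stats[rec["rater"]]
        # A rater who always gives the same score carries no ranking signal.
        z = 0.0 if sigma == 0 else (rec["score"] - mu) / sigma
        normalized.append({**rec, "z": z})
    return normalized


# Illustrative data: r_a is lenient, r_b is strict.
records = [
    {"rater": "r_a", "score": 4},
    {"rater": "r_a", "score": 5},
    {"rater": "r_b", "score": 2},
    {"rater": "r_b", "score": 3},
]
normalized = normalize_per_rater(records)
```

After normalization, the lenient rater's 4 and the strict rater's 2 land on the same standardized value, which is the behavior one would want if both mean "the weaker of the answers I saw."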

Process reward targets a different class of problem: in some tasks, value lies not only in the final answer but in the path the model takes to reach it. For these tasks, scoring only the final result is often insufficient, because errors can occur in intermediate steps, and intermediate errors are sometimes masked by a superficially correct final answer. Process reward can be abstracted as:

(x, {s_1, s_2, ..., s_t}, {r_1, r_2, ..., r_t})

where s_i is an intermediate step, reasoning fragment, tool call action, or local decision state, and r_i is the local reward for that step. Process rewards are most suitable for long-chain reasoning, multi-tool calling, complex execution planning, code generation, and agent workflows — tasks whose success depends not only on the terminal state but heavily on intermediate behavior quality.

From a data-system view, these three signals differ not just in storage format but in the function they serve when expressing preference. Pairwise preference is best at expressing relative ranking; scalar reward is suited to forming a unified scoring layer; process reward pulls feedback from the terminal back into the middle. Teams should choose the interface that fits the task's nature, annotation capability, and training goals — not treat "reward" as a single concept.

Further, these three correspond to three different problem framings. Pairwise preference answers "which of these two outputs should be kept"; scalar reward answers "roughly what quality level is this output"; process reward answers "how was this output produced, and is that path itself worth encouraging." If the team has not even distinguished these problem framings, a common pitfall arises: they want to solve a complex long-chain behavioral problem, but only collect overall-satisfaction-style labels — and the training target simply does not match the task structure.

Code example: Minimum data formats (JSONL) for the three signal types

For the same task input x, you can record supervision signals via different "feedback interfaces." Below are three minimum usable structures for data pipelines and annotation platforms.

{"type":"pairwise","prompt":"User: Please explain in three sentences what DPO is.","winner":"(a more concise answer covering key points)","loser":"(a more verbose, off-topic answer)","meta":{"task":"explain","dims":["helpful","concise"],"source":"human"}}

{"type":"scalar","prompt":"User: Summarize the following policy text...","response":"(candidate answer)","score":4,"meta":{"task":"summary","rubric":"v1","rater":"r_1027"}}

{"type":"process","prompt":"User: Break this problem into three steps and complete retrieval.","steps":[{"state":"Formulate retrieval question","output":"(step1 text)","reward":1},{"state":"Choose tool and parameters","output":"(step2 tool params)","reward":0},{"state":"Integrate evidence and answer","output":"(step3 final answer)","reward":1}],"meta":{"task":"rag_agent","unit":"step"}}
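A data pipeline would typically check these records before they reach training. The following is a minimal validation sketch for the three record types above; the field names follow the example records, while the `REQUIRED` table and `validate_record` helper are hypothetical and only cover the most basic structural checks.

```python
import json

# Minimum required fields per record type, taken from the example records.
REQUIRED = {
    "pairwise": {"prompt", "winner", "loser"},
    "scalar": {"prompt", "response", "score"},
    "process": {"prompt", "steps"},
}


def validate_record(line: str) -> list[str]:
    """Return a list of problems for one JSONL line; an empty list means OK."""
    try:
        rec = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(rec, dict):
        return ["record is not a JSON object"]

    kind = rec.get("type")
    if kind not in REQUIRED:
        return [f"unknown type: {kind!r}"]

    problems = [f"missing field: {name}" for name in sorted(REQUIRED[kind] - set(rec))]

    # A pair whose two sides are identical carries no preference signal.
    if kind == "pairwise" and "winner" in rec and rec.get("winner") == rec.get("loser"):
        problems.append("winner and loser are identical")

    # Every process step needs its own local reward.
    if kind == "process":
        steps = rec.get("steps", [])
        if not isinstance(steps, list):
            problems.append("steps is not a list")
        else:
            for i, step in enumerate(steps):
                if not isinstance(step, dict) or "reward" not in step:
                    problems.append(f"step {i} has no reward")
    return problems
```

Running `validate_record` over each line of a file and logging non-empty results is usually enough to catch malformed exports early, before they silently weaken the training signal.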

What tasks each of the three signal types suits¶

In terms of task type, pairwise preference, scalar reward, and process reward are not absolutely better or worse — they are three interfaces suited to different problem layers.

Pairwise preference is especially suited to open-ended generation, style tuning, and tasks where relative trade-offs are clear. Examples include question answering, summary rewriting, email rewriting, refusal template tuning, customer-service phrasing selection, and brand voice unification. In these tasks, what the team really cares about is not "what score is this answer worth" but "among several acceptable answers, which do we prefer to keep." Pairwise preference is the most natural way to express relative quality.

Scalar reward is more suitable for scenarios requiring unified ranking and cross-system sharing. For example, scalar rewards become more valuable when a team wants a unified candidate scorer to rank outputs across model versions, to coarse-filter feedback samples returning from production, to quality-check automatically generated data, or to share a common reward interface across tasks. Their advantage lies less in initial annotation than in downstream platform-level reuse.

Process reward suits tasks where "the result being correct isn't enough — the path must also be correct." For example, complex reasoning, RAG retrieval planning, agent tool calls, code repair, multi-step form filling, and workflow orchestration. The key issue in these tasks is not just whether the final output is usable, but whether the intermediate steps are stable, interpretable, transferable, and auditable. Rewarding only the terminal state in such cases may hide real risk.

Data requirements for direct preference optimization and reward modeling¶

When preference data actually enters training, the two most common routes are Direct Preference Optimization (DPO) and Reward Modeling (RM). Both rely on preference data, but their data quality, format, and governance requirements differ. Many teams take detours in preference learning largely because they have not first decided: what kind of data does our training method consume?

DPO's strength is its directness. It typically does not require training a separate explicit reward model; it uses preference pairs directly to increase the probability of winning answers relative to losing ones during parameter updates. In effect, DPO turns "we prefer A over B" directly into a training objective. So for DPO, the key data asset is high-quality preference pairs. What matters is not "how many points each answer is worth" but the reliability of the comparison. As long as win/lose relations are stable, candidate quality has discriminative range, and annotation rules are consistent, DPO usually works well.
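To make "turning a preference pair into a training objective" concrete, the standard DPO loss for a single pair (x, y_w, y_l) can be computed from four sequence log-probabilities: those of the winner and loser under the policy being trained and under a frozen reference model. The sketch below is a plain-Python illustration; the function name, argument names, and the default `beta=0.1` are assumptions for this example, and a real trainer would compute these values in batches with automatic differentiation.

```python
import math


def dpo_pair_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta=0.1):
    """DPO loss for one preference pair.

    logp_w / logp_l: log-probability of the winning / losing answer under the policy.
    ref_logp_w / ref_logp_l: the same quantities under the frozen reference model.
    """
    # How much more the policy favors the winner over the loser,
    # relative to the reference model, scaled by beta.
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))

    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Policy identical to the reference: no preference learned yet.
loss_start = dpo_pair_loss(-10.0, -12.0, -10.0, -12.0)
# Policy has raised the winner's probability relative to the reference.
loss_better = dpo_pair_loss(-8.0, -12.0, -10.0, -12.0)
```

Note that only the difference between winner and loser enters the loss, which is why the reliability of the comparison matters more than any absolute score attached to either answer.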

This means that for DPO, data teams should focus on candidate generation mechanisms, hard-case coverage, win/lose consistency, and clarity of comparison standards. If the gap between candidates is always too large, DPO only learns to distinguish "obvious good from bad" and cannot adapt to the subtle but important style differences in production. If the gap is too small but specifications are vague, annotator disagreement increases and the training signal weakens. DPO seems to save the step of training a reward model, but it actually demands very high quality in pair construction.

The RM route, in contrast, aims to first learn a "scorer." It wants the model not only to judge between A and B, but to give a relatively stable quality estimate for a single output. This route places higher demands on data, because it requires the signal to be cross-sample comparable to some degree. Even if the starting data is preference pairs, the team must think about how those relative comparisons can constrain a stable scoring function. If scalar rewards are used directly, the team must address scoring-scale inconsistency, task-distribution imbalance, and temporal drift.
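One widely used way to let relative comparisons constrain a scoring function is a Bradley-Terry style objective: the scorer is trained so that the sigmoid of (score of winner minus score of loser) is high. The sketch below is a toy illustration under strong assumptions: the reward is a linear function of two hand-made features, the feature values are invented, and training is plain gradient ascent. It is not the chapter's prescribed method.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def score(w, features):
    """Linear reward: dot product of weights and features."""
    return sum(wi * fi for wi, fi in zip(w, features))


def train_linear_rm(pairs, dim, lr=0.5, epochs=200):
    """Fit weights so that winners score higher than losers.

    pairs: list of (winner_features, loser_features).
    Maximizes log sigmoid(score(winner) - score(loser)) by gradient ascent.
    """
    w = [0.0] * dim
    for _ in range(epochs):
        for f_win, f_lose in pairs:
            diff = [a - b for a, b in zip(f_win, f_lose)]
            d = score(w, diff)
            # Gradient of log sigmoid(d) with respect to w is (1 - sigmoid(d)) * diff.
            g = 1.0 - sigmoid(d)
            w = [wi + lr * g * di for wi, di in zip(w, diff)]
    return w


# Invented features: [has_boundary_statement, length_in_hundreds_of_words].
# In every pair the winner includes a boundary statement; length varies.
pairs = [
    ([1, 0.5], [0, 0.5]),
    ([1, 1.0], [0, 0.4]),
    ([1, 0.3], [0, 0.9]),
]
w = train_linear_rm(pairs, dim=2)
```

Because the trained scorer assigns a number to any single output, it can be reused for ranking or filtering, but that reuse is only as trustworthy as the consistency of the comparisons it was fitted on.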

The benefit of RM is that once a reliable reward model is trained, it can be reused across many stages: candidate ranking, offline evaluation, policy optimization, online monitoring, model-as-judge assistance, etc. From a platform-building perspective, RM acts like a unified "reward interface." But for the same reason, its data requirements are stricter. Teams must care not only who wins but also why, by how much, whether scores are comparable across scenarios, and how dimension conflicts aggregate.

In engineering practice, if the team's strongest asset is a large pool of preference pairs and the goal is to quickly pull model behavior toward a better style, DPO is usually the more natural entry. If the team wants to build long-term reusable reward infrastructure, sharing a unified reward representation across models, training stages, and evaluation pipelines, RM offers higher value. Either way, the real prerequisite is not "collect more feedback" but solidifying the foundational data work: defining preferences, candidate generation, scoring dimensions, noise governance, and version management.

DPO relies more on "comparison quality"; RM relies more on "scale stability"¶

From a data-governance angle, the difference between DPO and RM can be stated bluntly: DPO fears unstable comparison relations more; RM fears unstable scoring scales more.

For DPO, as long as the win/lose relations among candidates under the same input are clear enough, many issues are tractable. It doesn't really care whether an answer is worth 8 or 9 points; it cares whether, between A and B, the annotation system can consistently pick the better one. The main risks for DPO are poor candidate construction, imbalanced difficulty distribution, vague win/lose criteria, and inconsistent annotator standards.
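A simple way to monitor comparison stability is to collect several votes per pair and route low-agreement pairs to review instead of training. The sketch below assumes a hypothetical mapping from pair IDs to annotator votes; the 0.75 threshold is an arbitrary illustration, not a recommended value.

```python
from collections import Counter


def agreement(votes):
    """Fraction of annotators who chose the majority label for one pair."""
    counts = Counter(votes)
    return counts.most_common(1)[0][1] / len(votes)


def split_by_agreement(pairs, threshold=0.75):
    """Separate pair IDs into those stable enough to train on and those needing review."""
    keep, review = [], []
    for pair_id, votes in pairs.items():
        (keep if agreement(votes) >= threshold else review).append(pair_id)
    return keep, review


# Illustrative votes: each annotator picks candidate "A" or "B".
pairs = {
    "p1": ["A", "A", "A", "A"],
    "p2": ["A", "B", "A", "B"],
    "p3": ["B", "B", "B", "A"],
}
keep, review = split_by_agreement(pairs)
```

Pairs that land in `review` are often more useful as input to specification revisions than as training data, since persistent disagreement usually points at an unclear rule rather than careless annotators.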

RM, having to learn a cross-sample generalizable scoring function, faces another problem: whether scores from different samples, times, and annotators can be treated as on the same scale. If not, the reward model learns not a quality function but a noise function mixing personnel, temporal, and task-distribution differences. So RM places higher demands on calibration, standardization, source labeling, and stratified modeling.

This is why many teams start with DPO on preference pairs to get a quick win, and only gradually build a more complex RM system. The former addresses "get the direction right first"; the latter addresses "establish unified reward infrastructure." They are not mutually exclusive but often correspond to different maturity stages.

Why candidate construction is a prerequisite for reward signal quality¶

Whether using DPO or RM, an often underrated issue is candidate construction. Many teams focus on "is the annotation accurate" and overlook the crucial upstream step: how are candidate answers generated in the first place? If the candidate pool's quality is imbalanced, even strict annotation can only learn low-resolution preferences.

For example, if candidate A is obviously better than B, annotation is easy, but such samples only teach the model to distinguish coarse-grained good/bad. If A and B are extremely close and the specification has not clarified the priority of conflicting dimensions, high-disagreement samples increase and the training signal weakens. The more ideal case is: candidates have real and business-meaningful differences, but those differences are not all on a single surface dimension obvious at a glance. Only such samples force the model to learn finer behavioral trade-offs.

Candidate construction is therefore an upstream core link of preference learning. Which models supply candidates, how differences are controlled, whether multiple styles are covered, whether boundary conflicts are included, whether you deliberately collect "all seem fine but the organization prefers one" samples — these directly determine how much information the downstream reward signal carries.
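One crude way to control candidate differences is to bucket candidate pairs by the gap in some proxy quality score and prioritize the middle band for annotation. The sketch below assumes a hypothetical proxy score per candidate (for example from an existing judge) and arbitrary `lo`/`hi` cutoffs. A single scalar gap cannot capture multi-dimensional differences, so this is only a first-pass filter.

```python
from itertools import combinations


def bucket_pairs(candidates, lo=0.05, hi=0.4):
    """Group candidate pairs by proxy-score gap.

    candidates: mapping of candidate ID to a proxy quality score in [0, 1].
    Gaps below `lo` are likely to cause annotator disagreement;
    gaps above `hi` only teach coarse good/bad distinctions.
    """
    buckets = {"too_close": [], "informative": [], "too_easy": []}
    for (id_a, s_a), (id_b, s_b) in combinations(candidates.items(), 2):
        gap = abs(s_a - s_b)
        if gap < lo:
            name = "too_close"
        elif gap > hi:
            name = "too_easy"
        else:
            name = "informative"
        buckets[name].append((id_a, id_b))
    return buckets


# Invented proxy scores for four candidates answering the same prompt.
candidates = {"c1": 0.90, "c2": 0.88, "c3": 0.70, "c4": 0.20}
buckets = bucket_pairs(candidates)
```

The "too_close" bucket is not necessarily waste: if the specification states clear priorities for conflicting dimensions, those pairs may be exactly the "all seem fine but the organization prefers one" samples worth collecting deliberately.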

The difference between final-result rewards and process rewards¶

When teams first encounter preference learning, they often default to judging only the final output: is this answer overall good, is it worth encouraging? This is a reasonable starting point and natural for many basic tasks. But as task complexity rises, teams quickly find that looking only at the final result often hides the truly important problems. What makes a model dangerous is often not that the final text is blatantly wrong, but that it reached that result through an unstable, uninterpretable, non-transferable process.

The so-called final-result reward essentially compresses the whole generation process into one terminal evaluation. It best suits tasks whose terminal state's value can be clearly judged: whether a summary covers the key points, whether classification is correct, whether a customer-service reply is polite and compliant, whether a refusal hits the mark. Here, the result is the main carrier of value, the intermediate path is not central, and terminal-only reward is usually enough.

In many complex tasks, however, terminal-only evaluation suffers two typical problems. First, the "lucky guess" problem: through unreliable reasoning, step skipping, wrong tool calls, or sheer chance, the model arrives at a correct result. Rewarding only the terminal state does not teach it reliable methodology; it reinforces the habit "as long as it's right at the end, it's fine." Second, the "surface-acceptable" problem: the final output looks satisfactory, but the intermediate process violated important principles — necessary retrieval was skipped, evidence verification was omitted, tool-call order was inefficient, the model was overconfident at key nodes, or it relied on intermediate assumptions that should not have been exposed. Terminal-only reward masks all these risks.

The point of process reward is to push the supervisory signal forward so the model is responsible not only for "what is achieved" but also for "how it is achieved." For reasoning tasks, process reward can encourage complete logical steps, clear causal relations, and timely correction of erroneous intermediate conclusions. For tool-call tasks, it can encourage retrieve-then-answer, verify-then-execute, and complete-parameters-before-calling. For code generation or workflow orchestration tasks, it can constrain intermediate design quality rather than only checking final test pass.

Process reward is not free. It substantially raises data-construction difficulty, because the team must first define what a "process unit" is: a sentence, a reasoning step, a tool call, a planning node, or a subtask completion state? Then it must define what counts as "good process" and "bad process" and design executable annotation specifications. Process reward pushes preference learning from "answer-comparison engineering" into "behavior-decomposition engineering." But once a team enters long-chain tasks, high-value tasks, or high-risk automation scenarios, this step is usually inevitable.

Why process reward is more like "behavior supervision" than just "result supervision"¶

Terminal rewards focus on what the model delivers; process rewards focus on how the model produces those deliveries. In that sense, process reward is closer to supervising behavior itself rather than merely scoring results. It tries to answer not just "is the final answer correct," but "did the model take acceptable, interpretable, reusable, transferable actions to reach the answer." This gives process reward a strong behavior-constraint flavor and brings it closer to the real-world need to manage and govern agents.

This difference is especially crucial in complex systems. In many automated scenarios, system risk does not lie in the output string itself but in the path that produced the result. For instance, an agent finally completes the task, but along the way it performed redundant retrieval, erroneous parameter calls, evidence-free inferences, or risky step-skipping — they just happened not to surface in the final output. Looking only at the terminal state lets such bad behavior pass; over time, the model increasingly prefers "lucky-but-easy" paths. For training systems, this path dependence is often more dangerous than any single wrong answer, because it means the model has formed an unsound preference at the policy level: as long as there's a chance of getting it right, there's no incentive to build a more regulated, more reliable execution process.

From a training-signal angle, terminal rewards are like "after-the-fact acceptance": after the task is done, the system gives a holistic evaluation based on the final answer or final state. This is useful, but if the task is long-chain, multi-stage, and strongly state-dependent, a single terminal signal is too sparse. What the model did right or wrong in the first dozen steps is not finely distinguished by the terminal reward, which compresses the trajectory into one result label and assigns that label to every step in the chain. As a result, good local decisions may be punished by a bad finale, and risky local moves that happened to succeed are reinforced.

Process reward addresses exactly this credit-assignment distortion. It unfolds supervision from the task's end to intermediate states, allowing the system to express preferences at key trajectory nodes. Is a retrieval action necessary? Is a tool call compliant? Is an intermediate conclusion supported by evidence? Did a task switch lose state? Behaviors that terminal rewards mask can be explicitly evaluated under process reward. Reward becomes not just "did you succeed" but "in what way did you succeed (or fail)." This directly changes what the model learns: not just an output distribution, but a behavior distribution.
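The credit-assignment difference can be seen on a toy trajectory. The sketch below is illustrative only: the trajectory, the `ok` flags, and the `terminal_credit` helper are invented, and real systems distribute terminal reward through the training algorithm rather than by literally copying a label.

```python
def terminal_credit(num_steps, terminal_reward):
    """Terminal-only supervision: every step effectively inherits the final label."""
    return [terminal_reward] * num_steps


# Invented trajectory: the second step is a bad tool call, but the final
# answer happens to be correct anyway.
trajectory = [
    {"step": "formulate retrieval question", "ok": True},
    {"step": "call tool with wrong parameters", "ok": False},
    {"step": "answer (happens to be correct)", "ok": True},
]

terminal = terminal_credit(len(trajectory), terminal_reward=1)
process = [1 if s["ok"] else 0 for s in trajectory]

# Steps that terminal-only reward would reinforce even though the
# step-level judgment marks them as bad.
masked = [s["step"] for s, t, p in zip(trajectory, terminal, process) if t > p]
```

Here `masked` contains the faulty tool call: under terminal-only supervision it receives the same positive signal as the sound steps around it, which is exactly the distortion process reward is meant to correct.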

The real significance of process reward, therefore, is not just to make each step prettier — it is to put "acceptable behavioral paths" themselves under supervision. It pushes preference learning from "answer optimization" to "policy optimization." Under result-supervision, the model gradually learns "what kinds of answers tend to score high"; under process supervision, it must also learn "what kinds of action sequences are permitted, encouraged, and worth repeating." The former is closer to shaping outputs; the latter is closer to shaping the decision mechanism itself.

This distinction is especially pronounced in agents, tool calls, multi-hop retrieval, and code execution, where tasks are not single-step text generation but continuous interaction with external environments and ongoing choices. Errors do not always show up immediately in the final text; they often appear first as behavioral deviations: skipping verification when it was needed, failing to stop when stopping was appropriate, using a high-privilege interface when a safe interface should be called, advancing a workflow without confirmation. If the training system cannot give negative feedback for such behaviors, the model will form an "outcome-over-process" execution habit. Once the task environment grows more complex, feedback delays lengthen, and the margin for error shrinks, this habit quickly becomes systemic fragility.

Furthermore, process reward serves to "make implicit norms explicit." In many deployment scenarios, what we really care about is not only result correctness but whether the process complies with organizational, regulatory, risk, and operational boundaries. In financial QA, was a verifiable basis cited? In medical assistance, was uncertainty preserved? In an enterprise agent, were approval workflows and authority boundaries respected? With terminal-only reward, these norms can only exist as vague overall preferences that the model rarely learns stably; with process reward, they can be tied to specific behavioral nodes, so that "caution," "compliance," "evidence-backed," and "no-step-skipping" cease to be slogans and become scorable, comparable, optimizable intermediate features.

This does not mean process reward replaces terminal reward. Many tasks still require a final "did you achieve the goal" verdict. More accurately, process reward fills the blind spots of terminal reward. Terminal reward tells the model "what results are worth pursuing"; process reward tells the model "what paths are trustworthy." Together, they let the training system optimize both result effectiveness and process reliability. Without process reward, a result-chasing model may perform well on static evals while repeatedly showing rough execution, risky behavior, and unstable policies in real deployments.

From an engineering perspective, process reward matters because it brings training targets closer to deployment targets. Real systems never accept only the final output; they equally care whether intermediate behavior is safe, robust, economical, and explainable. No enterprise ignores the fact that an automated system performed unauthorized operations, redundant calls, erroneous reads/writes, or unfounded decisions just because it "finally got the job done." Likewise, a high-quality training system cannot only reward "happens-to-be-correct"; it should progressively encourage reproducible, auditable, generalizable behaviors. In that sense, process reward is "behavior supervision," not merely a finer-grained version of "result supervision."

Defining the "process unit" is the first hard problem in process reward design¶

Process reward sounds natural conceptually, but the moment it hits data construction, the first core problem appears: what counts as one step? Across tasks, how you divide "process units" significantly affects annotation quality and reward stability.

In reasoning tasks, a process unit might be one reasoning statement, one logical jump, or one intermediate conclusion. In tool-call tasks, it might be one retrieval action, one parameter fill, or one API call. In multi-step execution, it might be a plan node, a subtask completion state, or one state transition. Different definitions yield very different supervision granularities for the same task chain.

If granularity is too coarse, process reward degenerates into little more than terminal reward; if too fine, annotation costs explode and consistency is harder to maintain. So process reward is not simply "label a few more middle steps"; it requires first establishing a stable behavior-segmentation framework. Without that, process supervision easily becomes a high-cost, low-stability data project.

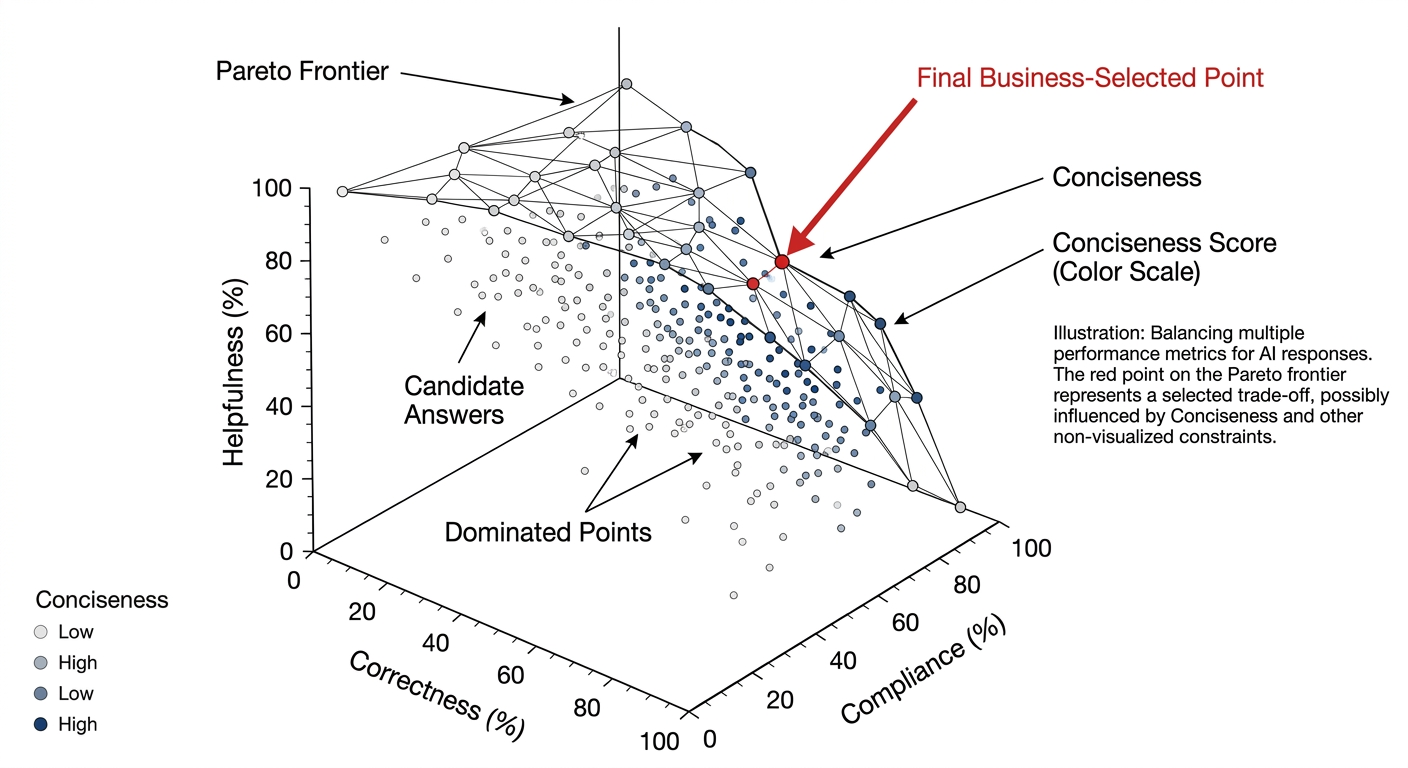

Multi-objective preference, process reward, and Pareto trade-offs¶

In real production systems, preferences are almost never single-objective. A model may simultaneously be required to be correct, helpful, bounded, concise, stable, on-brand, low-latency, low-hallucination, and avoid over-refusal — while providing enough interpretability in certain scenarios. The problem is that these goals do not always grow in lockstep. Often, strengthening one dimension naturally sacrifices another. What makes preference learning truly hard is precisely this multi-objective structure.

If a team still operates on the "total score" mindset, two problems emerge. First, multiple objectives are crudely summed, so the team doesn't know why the model improved or where it got worse. Second, conflicts in the training set are averaged out, the model cannot learn clear trade-off strategies, and it forms unstable behavior under blurred signals. Introducing multi-objective preference in preference data design is therefore not just refinement — it is necessary for handling real business conflicts.

Multi-objective preference means the team no longer just records "which candidate is overall better"; it preserves judgment information across multiple evaluation dimensions. For instance, in an enterprise Q&A scenario, candidate A may excel on helpfulness and naturalness but be weaker on boundary clarity; candidate B may be more robust but slightly stiffer. A single overall win label hides the conflict; recording judgments per dimension provides higher resolution for downstream aggregation, reward model training, and problem analysis.

This is where the concept of Pareto trade-off becomes unavoidable in preference learning. For multi-objective systems, no single "absolute optimum" exists — only a set of non-dominated solutions across objectives, i.e., the Pareto frontier. Answers on the frontier mean improving one objective further requires sacrificing another. The data team's task is not to eliminate this frontier but to be explicit about where the organization wants to sit on it. The team must formally write into preference specifications and data interfaces what scenarios warrant sacrificing naturalness for robustness, what tasks warrant sacrificing some conciseness for fuller explanation, and so on.
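The notion of a non-dominated set can be made concrete with a few lines of code. The sketch below assumes each candidate has already been scored on two objectives where higher is better; the candidate names and numbers are invented for illustration.

```python
def dominates(a, b):
    """a dominates b if a is at least as good on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scores):
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, s in scores.items()
        if not any(dominates(o, s) for other, o in scores.items() if other != name)
    ]


# Invented (safety, naturalness) scores; higher is better on both.
scores = {
    "A": (0.9, 0.5),
    "B": (0.6, 0.9),
    "C": (0.5, 0.4),
    "D": (0.8, 0.7),
}
front = pareto_front(scores)
```

Candidate C is dropped because A beats it on both objectives, while A, B, and D all remain. Choosing among those three is not a computation; it is the organizational decision about where to sit on the frontier that the preference specification has to make explicit.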

Process reward and multi-objective preference are naturally coupled. Many objectives are not embodied only in the terminal state but in the process. "Evidence before conclusion" is a process preference. "State limitations before giving advice in high-risk situations" is a process preference. "Parameter validation before tool invocation" is a process preference. If the team only does multi-dimensional scoring at the final-answer level and does not record local merits in the process, supporting Pareto trade-offs for complex systems is hard. Many key objectives are not in the final sentence — they hide along the generation path.

So in terms of preference-learning maturity, teams usually pass through a gradual upgrade: start from overall preference pairs to solve "who is overall better"; introduce multi-dimensional evaluation to solve "why better"; then add process reward in complex tasks to solve "how to get better in the right way"; finally form a multi-objective, layered, interpretable reward-signal system. This is the key signal that preference learning has moved from concept to engineering.

Code example: "Dimensionalized recording" of multi-objective preference annotation

When you don't want a "total score" to hide conflict, preserve dimension labels and aggregation policies in the data (aggregation can be decided at training/sampling time rather than locked in at annotation).

{
  "type": "pairwise_multi_dim",
  "prompt": "User: I've had some chest tightness recently — should I take medication?",
  "candidates": {
    "A": "(more natural, but lacks risk triage and medical-help prompts)",
    "B": "(provides risk prompt and information clarification first, then general advice)"
  },
  "judgement": {
    "overall_winner": "B",
    "dims": {
      "safety_boundary": "B",
      "helpfulness": "B",
      "naturalness": "A",
      "conciseness": "A"
    },
    "reason_tags": ["High-risk: triage first", "Insufficient info: clarify first"]
  },
  "meta": {"scenario":"medical","risk_level":"high","policy":"medical_v2"}
}
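Because the per-dimension verdicts are preserved, the overall winner can be derived at training or sampling time under a scenario-specific policy. The sketch below uses a lexicographic rule: the first dimension in a priority list that has a non-tie verdict decides. The `POLICIES` table, its orderings, and the `aggregate_winner` helper are hypothetical; weighted sums or other schemes are equally possible.

```python
# Per-dimension verdicts, as in the "dims" field of the record above.
record_dims = {
    "safety_boundary": "B",
    "helpfulness": "B",
    "naturalness": "A",
    "conciseness": "A",
}

# Hypothetical priority orderings per risk level.
POLICIES = {
    "high": ["safety_boundary", "helpfulness", "naturalness", "conciseness"],
    "low": ["naturalness", "conciseness", "helpfulness", "safety_boundary"],
}


def aggregate_winner(dims, risk_level):
    """Lexicographic aggregation: the highest-priority non-tie dimension decides."""
    for dim in POLICIES[risk_level]:
        verdict = dims.get(dim, "tie")
        if verdict != "tie":
            return verdict
    return "tie"


winner_high_risk = aggregate_winner(record_dims, "high")
winner_low_risk = aggregate_winner(record_dims, "low")
```

The same annotation yields B under the high-risk ordering and A under the low-risk one, which is the practical benefit of deferring aggregation: a policy change does not require re-annotating the data.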

Multi-objective preference cannot be hidden behind a "total score"¶

When designing preference data, many teams want to compress all dimensions into one overall score, thinking it's cleaner and easier to train on. But once goals are genuinely multi-dimensional, a total score inevitably loses key information. At best it tells you "overall it seems better," not whether helpfulness rose, compliance dropped, or conciseness improved at the expense of explanation.

The biggest temptation of a total score is that it looks clean. Each sample is reduced to "which is better" and "by how much," easy to feed into training and easy to report, and version comparisons can even produce a nicely rising curve. But that is precisely the problem: once all differences are compressed into one number, the team increasingly cannot see what is happening behind it. The model may indeed be "more like a high-score answer," but whether that high score reflects more helpfulness or just more long-form completeness; whether it reflects safety or learning lots of vague hedging to dodge risk; whether it reflects brand voice or trading efficiency for softer phrasing — all of this can be smoothed out together.

Such information loss may be tolerable early on, but once the system enters fine-grained optimization, it quickly becomes a bottleneck. The team cannot tell which kind of change the training signal is driving, nor whether a given version update pushed the system to the wrong trade-off point. That is why multi-objective preference data often needs to retain dimension labels, per-dimension scores, or at least preference rationale rather than only a crude final win/lose.

In real engineering, many issues are not "this answer is absolutely better" but "it is better on one dimension and worse on another." In a customer-service scenario, an answer may be warmer and more complete but more verbose, so the next-step action gets buried. In a legal scenario, an answer may be clearer in expression but overcommit under uncertain conditions. In a medical scenario, an answer may be more restrained and safer, yet users feel it's information-thin and doesn't address their main concern. These are not simple better/worse relations but real tugs-of-war among multiple objectives. With only a total score, the team sees "this answer won" without knowing on what grounds, and at what cost.

This is also why many systems, in later optimization phases, exhibit a puzzling pattern: the subjective feel of the product in production shifts and user feedback changes, yet offline total scores show no obvious issue. The model may have become safer but more boilerplate; more concise but stripped of necessary explanation; more brand-aligned but evasive on complex questions. With only a total score, such changes get averaged away or are misinterpreted as "roughly the same." But users do not feel an average. They feel specifically which experience deteriorated and which boundary loosened.

There is a more insidious problem with total scores: they force the team to make many value judgments prematurely that should not have been crudely merged. When helpfulness and compliance conflict, which matters more? When conciseness and explanation tug at each other, which wins? When warmth and professionalism can't both be maxed out, what should the system protect? Folding all this into a single score looks like making training more direct, but it quietly performs a complex trade-off at the data layer — opaque and unstable, varying across annotators, business teams, and stages. The number is the same, but what it represents has shifted.

Worse, a team that looks only at the total score cannot consciously manage trade-offs. Suppose the current top priority is tightening boundaries in high-risk scenarios without obviously harming helpfulness. If the data records only the final win/lose, then after training, even if the model has indeed become more conservative, the team has no way to confirm whether it also became vague on questions that should have been answered clearly. Conversely, if a version pursued more natural, human-like phrasing and the model started oversharing in contexts that call for restraint, total scores may not expose it immediately. A total score tells you only the overall lean, not whether that lean comes at the cost of a critical dimension.
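The point about a flat total hiding a shifted trade-off can be made concrete with a small sketch. It assumes the team already stores a per-dimension score for each model version; the dimension names, the numbers, and the `tolerance` threshold are hypothetical illustrations, not measured results.

```python
from statistics import mean

# Hypothetical per-dimension scores (0-1) for two model versions.
# Names and numbers are illustrative only.
V1 = {"helpfulness": 0.70, "safety": 0.70, "conciseness": 0.70}
V2 = {"helpfulness": 0.58, "safety": 0.88, "conciseness": 0.64}


def total_score(scores: dict[str, float]) -> float:
    """Collapse all dimensions into one unweighted average."""
    return mean(scores.values())


def dimension_regressions(old: dict[str, float], new: dict[str, float],
                          tolerance: float = 0.05) -> dict[str, float]:
    """Return the change for every dimension that dropped by more than `tolerance`."""
    return {
        dim: round(new[dim] - old[dim], 3)
        for dim in old
        if old[dim] - new[dim] > tolerance
    }


if __name__ == "__main__":
    print("total v1:", total_score(V1))
    print("total v2:", total_score(V2))
    print("regressed dimensions:", dimension_regressions(V1, V2))
```

In this toy data the two totals are essentially equal, while the per-dimension view flags that helpfulness and conciseness were given up in exchange for safety. That comparison is only possible if the dimensions were recorded in the first place.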

So what multi-objective preference data must really preserve is not just the result but the structure behind it. At minimum, the team should know what each preference judgment was based on: accuracy, conciseness, safety, or tone/format alignment. Only with that information can downstream training, analysis, and regression have something to grip. Otherwise, every time the model changes, the team can only guess: where was it pushed too far, and where not far enough.

In many cases, retaining dimension information does not require an overly complex system. The point isn't the number of tags but ensuring critical conflicts are not flattened. At minimum, distinguish the high-frequency tug-of-war dimensions like helpfulness, compliance, conciseness, and explanatory sufficiency; or require annotators to note "why A over B" so downstream analysis can return to the original rationale. The goal isn't a richer spreadsheet but giving the team visibility into what they're actually changing across version iterations. A total score can serve as a summary view but cannot replace the underlying structure.
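As a rough sketch of what "retaining dimension information" might look like at the record level, the structure below keeps the overall winner alongside a per-dimension winner and a free-text rationale. The class name, field names, and the `tradeoff_costs` helper are assumptions made for illustration, not a schema prescribed by this chapter.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PreferenceRecord:
    """One pairwise judgment that keeps the structure behind the result."""
    prompt_id: str
    winner: str                                   # overall winner: "A" or "B"
    dimension_winners: dict[str, str] = field(default_factory=dict)  # dim -> "A" / "B" / "tie"
    rationale: str = ""                           # annotator's "why A over B"


def tradeoff_costs(records: list[PreferenceRecord]) -> dict[str, int]:
    """Count, per dimension, how often the overall winner lost on that dimension."""
    costs: Counter[str] = Counter()
    for record in records:
        loser = "B" if record.winner == "A" else "A"
        for dim, dim_winner in record.dimension_winners.items():
            if dim_winner == loser:
                costs[dim] += 1
    return dict(costs)


if __name__ == "__main__":
    sample = [
        PreferenceRecord("p1", "A", {"helpfulness": "A", "conciseness": "B"},
                         "A is more complete but buries the next step"),
        PreferenceRecord("p2", "B", {"safety": "B", "helpfulness": "A"}),
    ]
    print(tradeoff_costs(sample))
```

A summary like this gives downstream analysis a way to ask which dimension the preferred answers are routinely paying for, which a bare win/lose label cannot answer.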

In the end, the hardest part of multi-objective preference learning has never been stacking many dimensions together. It is acknowledging that they will not always move in the same direction. Safer sometimes means more conservative; more concise sometimes means one less layer of explanation; warmer is not always the most efficient. What system optimization really needs is not to pretend the conflicts don't exist, but to preserve them, see them, and consciously decide where to lean. If you flatten every tension into one total score, training certainly becomes simpler, but you also lose, much sooner, the ability to explain how the system's behavior is changing. By then the score is still climbing while the model drifts away from where you wanted it to go.

What Pareto trade-offs mean for the data team¶

Pareto trade-off is not just a term from optimization theory. For the data team, it means a very practical issue: you cannot push every objective to the limit simultaneously, so you must be clear about which sacrifices are acceptable and which are not.

For example, in high-risk Q&A, the team may sacrifice some naturalness for clearer boundaries; in marketing copy, it may accept some stylistic tension for sharper brand expression; in an enterprise assistant, auditability may matter more than companionship. None of these is decided by the training algorithm alone — they must first be expressed by the data team through preference specifications and sample design.

So the real meaning of Pareto trade-off in preference learning is not drawing a pretty frontier — it is forcing the team to turn "where do we want to push the system" from verbal preference into explicit data choices.
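One way to turn "which sacrifices are acceptable" into an explicit choice is sketched below. It assumes each candidate version has per-dimension scores where higher is better; the version names, dimension names, scores, and floor values are all hypothetical.

```python
def dominates(a: dict[str, float], b: dict[str, float]) -> bool:
    """a dominates b if it is at least as good everywhere and strictly better somewhere."""
    return all(a[d] >= b[d] for d in a) and any(a[d] > b[d] for d in a)


def pareto_front(candidates: dict[str, dict[str, float]]) -> list[str]:
    """Names of candidates that no other candidate dominates."""
    return [
        name for name, scores in candidates.items()
        if not any(dominates(other, scores)
                   for other_name, other in candidates.items() if other_name != name)
    ]


def within_floors(candidates: dict[str, dict[str, float]],
                  floors: dict[str, float]) -> list[str]:
    """Names of candidates that stay above every per-dimension floor."""
    return [
        name for name, scores in candidates.items()
        if all(scores[d] >= floor for d, floor in floors.items())
    ]


# Hypothetical evaluation results for a high-risk Q&A product.
CANDIDATES = {
    "v1": {"boundary_clarity": 0.60, "naturalness": 0.90},
    "v2": {"boundary_clarity": 0.90, "naturalness": 0.70},
    "v3": {"boundary_clarity": 0.80, "naturalness": 0.60},  # worse than v2 on both
    "v4": {"boundary_clarity": 0.95, "naturalness": 0.40},
}
# The data team's explicit statement of which sacrifices are not acceptable.
FLOORS = {"boundary_clarity": 0.85, "naturalness": 0.50}

if __name__ == "__main__":
    front = pareto_front(CANDIDATES)
    print("non-dominated:", front)
    print("acceptable:", [n for n in front if n in within_floors(CANDIDATES, FLOORS)])
```

The frontier only lists the options that are not strictly worse than another. The floors are where the team's decision lives: they encode, as data, that naturalness may be traded for boundary clarity in this scenario but only down to a stated limit.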

Evolution from single preference to layered reward systems¶

From an engineering-maturity perspective, preference learning rarely jumps straight into multi-objective, process-level, high-resolution mode. The more common path is: first build a single preference-pair system so the model learns basic ranking; then progressively introduce dimensional evaluation so the system knows not only who is better but why; then add process reward in high-value complex tasks so the system learns "to get better in the right way"; finally, through layered training and version governance, integrate these signal types into long-term maintainable reward infrastructure.

This evolutionary path matters because it shows that preference learning is not a static data format but an engineering capability that is upgraded step by step. The team doesn't need to build everything complex at the start, but it must be clear about where it is heading. Otherwise, an early-stage data system that was simplified too aggressively becomes a bottleneck later.

Figure 13-2: Multi-objective preference trade-offs

13.3 Preference Sources and Supervision Modes¶

Expert annotation, ordinary user feedback, model-vs-model evaluation, rule-based judging¶

Where preference signals come from is one of the most fundamental questions in preference-learning design, because the source determines not only collection cost and scale but also whose value judgment the reward system ultimately reflects. In practice, preference data sources usually fall into four categories: expert annotation, ordinary user feedback, model-vs-model evaluation, and rule-based judging. Each has its value and clear limits. For teams that need to actually deploy, the question is never "which one is the most advanced" — it is understanding what role each can and cannot play.

Expert annotation suits tasks with high knowledge barriers, high risk, and judgment standards that depend heavily on professional training: medical advice, legal interpretation, financial compliance, complex enterprise process Q&A. In such tasks, many answers that look correct hide serious problems that ordinary users or general annotators struggle to spot. The biggest value of experts is not only judging which candidate is better but also articulating why, which makes implicit professional standards explicit. For data teams, expert annotation usually plays a "calibration" role, establishing a high-confidence benchmark for a task class. Its drawbacks are equally obvious: it is expensive, slow, and limited in coverage, and experts themselves can disagree, both in individual judgments and in their underlying theoretical positions. Expert signals are precious but cannot single-handedly support a large-scale preference system.

Ordinary user feedback is complementary to experts. It is closest to real product use and can reflect end-user experience at scale: is the answer convenient, did it really help complete the task, is the tone comfortable, is the explanation adequate. Many issues of helpfulness, readability, and interaction smoothness are more sensitively detected by users than by experts. But user feedback has its own pronounced problem: it is a heavily mixed signal. A user may like an answer because it's more natural, faster, or aligned with their personal stance — not because it's more correct, safer, or more compliant. So user feedback is better suited as an experience-layer preference, and should not be the sole supervision source in high-risk tasks.

Model-vs-model evaluation has become a common method for scaling preference data in recent years. It typically generates candidates from multiple models, then has another model act as a judge to compare, score, or explain the candidates. Its advantage is a large reduction in cold-start cost, which makes it suitable for initial filtering, expanding long-tail coverage, constructing hard-comparison samples, and prioritizing human-annotation queues. The most valuable use of model judging is not "replacing humans" but improving production efficiency. Its flaw is equally clear: a model judge inherits its own training biases, may systematically favor certain phrasings, language styles, or structured templates, and may be more lenient toward expressions it is familiar with, which amplifies homogeneity bias. So model-vs-model evaluation usually fits as auxiliary supervision, not as the final arbiter in high-risk scenarios.

Rule-based judging separates the programmable portion of judgment from subjective preference. It suits format correctness, mandatory-field completeness, sensitive-word and forbidden-content detection, tool-call parameter validation, length-threshold control, and rule-violation detection. Its advantages are high consistency, auditability, and low cost, which makes it especially valuable for compliance and process constraints. But coverage is inherently limited: rules can only judge constraints that can be explicitly encoded; they cannot substitute for judgments of helpfulness, naturalness, or genuine correctness.
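A minimal sketch of such a rule-based judge follows, covering format, mandatory fields, length, and forbidden content. It assumes model outputs are JSON objects with an `answer` and a `disclaimer` field; the field names, the forbidden phrase, and the length limit are hypothetical stand-ins for a real business specification.

```python
import json

# Hypothetical constraints; a real deployment would load these from its own spec.
REQUIRED_FIELDS = {"answer", "disclaimer"}
FORBIDDEN_TERMS = {"guaranteed returns"}
MAX_ANSWER_CHARS = 600


def rule_judge(raw_output: str) -> list[str]:
    """Return a list of rule violations; an empty list means all encoded checks passed."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["format:not_valid_json"]
    if not isinstance(payload, dict):
        return ["format:not_an_object"]

    violations = [f"missing_field:{name}"
                  for name in sorted(REQUIRED_FIELDS - set(payload))]

    answer = str(payload.get("answer", ""))
    if len(answer) > MAX_ANSWER_CHARS:
        violations.append("length:answer_too_long")

    lowered = answer.lower()
    violations += [f"forbidden_term:{term}"
                   for term in sorted(FORBIDDEN_TERMS) if term in lowered]
    return violations


if __name__ == "__main__":
    print(rule_judge('{"answer": "This fund offers guaranteed returns."}'))
```

Each violation is a named, auditable reason rather than a score. Note what the function does not attempt: it says nothing about whether a passing answer is helpful or correct, which is exactly the coverage limit described above.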

A mature preference system is therefore not single-source but layered. Experts calibrate high-risk, high-value tasks; ordinary users reflect real experience; model judging scales coverage and pre-ranks; rules handle hard boundary checks. The data team's real job is to define weights, applicable scenarios, and conflict-resolution mechanisms for each source, not to say in the abstract "we use human preference" or "we use AI feedback." The "preference source" is itself part of reward-system design.

Mixing online preference, offline preference, and synthetic preference¶

Besides classifying by source, preference data can also be classified by timing and construction into offline preference, online preference, and synthetic preference. These coexist in a mature system, each playing different lifecycle roles.

Offline preference is usually the starting point of preference learning. The team builds a batch of input tasks, has one or several candidate models generate multiple output versions, and then organizes human comparison, scoring, or process review. Offline preference's advantage is controllability: tasks can be carefully sampled, candidates can be designed with deliberate difficulty distributions, the annotation environment is stable, QA processes are clear, and golden sets and initial annotation specifications are easy to establish. In the early stages of preference learning, offline preference is essentially irreplaceable, because the team must first learn "how to define preference" in a clean, analyzable, reviewable environment.

Online preference comes from real interaction behavior after launch: likes, dislikes, acceptance, retries, follow-up questions, copy-answer, transfer-to-human, abandonment, and so on. It reflects the model's behavior on the real distribution and is the fastest way to expose drift in new tasks, new user populations, or new business scenarios. The biggest value of online preference is freshness and timeliness: it lets the data team see real feedback structures beyond the offline set. But its noise is also the greatest: not all users give feedback, and those who do are not representative of the average user; many behavioral signals are weak labels that do not map directly to quality judgments; and the signals are confounded by UI, interaction pacing, and task context. So online preference is usually suited to trend monitoring, sample backflow, candidate-pool expansion, and online correction, not to direct use as high-confidence training labels.
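The "weak label, then backflow" idea can be sketched as follows. The event names mirror the behaviors listed above, but the numeric weights and the threshold are hypothetical, and the output is a queue for offline annotation rather than a training label.

```python
# Hypothetical weak-signal weights; none of these is a quality judgment by itself.
SIGNAL_WEIGHTS = {
    "like": 1.0,
    "accept": 0.5,
    "copy_answer": 0.5,
    "follow_up": 0.0,          # ambiguous: may mean interest or confusion
    "retry": -0.5,
    "abandon": -0.5,
    "dislike": -1.0,
    "transfer_to_human": -1.0,
}


def weak_score(events: list[str]) -> float:
    """Sum the weak signals observed for one response; unknown events count as 0."""
    return sum(SIGNAL_WEIGHTS.get(event, 0.0) for event in events)


def backflow_candidates(logs: dict[str, list[str]], threshold: float = -1.0) -> list[str]:
    """Response ids whose weak score is at or below `threshold`.

    These are queued for offline human review, not used directly as labels.
    """
    return sorted(rid for rid, events in logs.items() if weak_score(events) <= threshold)


if __name__ == "__main__":
    logs = {
        "r1": ["like", "copy_answer"],
        "r2": ["retry", "dislike"],
        "r3": ["abandon"],
    }
    print(backflow_candidates(logs))
```

Keeping the aggregation this simple is deliberate: the score is only used to prioritize which samples humans look at, so its noise affects queue order rather than what the model is trained on.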

Synthetic preference is an expansion method that sits between manual and fully automatic construction. It may come from model-judge-generated comparisons, rule-derived signals, log re-composition, automatically constructed hard pairs, or pairs derived from existing scalar scores. Synthetic preference greatly boosts data-production efficiency, especially for forming an initial preference corpus during cold start or scaling coverage along low-risk dimensions. But its boundary must be clear: it is a "usable, but use with care" auxiliary signal, not naturally equivalent to high-quality human preference. Especially in high-risk scenarios, synthetic preference should not replace human and expert calibration; it should only supplement them, with explicit confidence levels attached.
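As one example of the construction routes listed above, the sketch below derives preference pairs from existing scalar scores. It assumes each response to the same prompt already has a numeric score; the `min_margin` threshold and the `source` and `confidence` tags are hypothetical conventions for marking the pairs as synthetic.

```python
from itertools import combinations


def pairs_from_scores(scored: list[tuple[str, float]],
                      min_margin: float = 1.0) -> list[dict]:
    """Turn scalar-scored responses to one prompt into tagged preference pairs.

    Pairs whose score gap is below `min_margin` are skipped, since a small gap
    in a scalar score is weak evidence of a real preference.
    """
    pairs = []
    for (id_a, score_a), (id_b, score_b) in combinations(scored, 2):
        margin = abs(score_a - score_b)
        if margin < min_margin:
            continue
        chosen, rejected = (id_a, id_b) if score_a > score_b else (id_b, id_a)
        pairs.append({
            "chosen": chosen,
            "rejected": rejected,
            "margin": margin,
            "source": "synthetic:scalar_score",  # provenance stays attached to the pair
            "confidence": "low",                 # never promoted to golden automatically
        })
    return pairs


if __name__ == "__main__":
    for pair in pairs_from_scores([("a", 4.5), ("b", 4.0), ("c", 2.0)]):
        print(pair)
```

Carrying the provenance and confidence tags on every pair is what lets later stages filter synthetic data out of high-risk training sets or weight it down.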

A truly robust preference data system usually adopts an "offline as base, online as correction, synthetic for expansion" hybrid. Offline data provides a structurally clear baseline; online data offers fresh distributional correction; synthetic data brings scale and coverage. The data team's job is not to pick one of the three but to define how they cooperate: which tasks are sampled offline, which online signals can flow back into the offline annotation pool, which synthetic signals are used only for candidate filtering rather than fed directly into main training, and which sources can serve as weak supervision but never as golden labels. Only then does the hybrid preference system avoid becoming a confused, low-confidence, hard-to-explain hodgepodge.

Preference annotation workflow design for high-risk scenarios¶

In high-risk scenarios, preference annotation differs entirely from annotation for ordinary conversational scenarios. The point is not "which answer is more likable" but "which answer is more acceptable in terms of accountability, professionalism, boundary control, and risk exposure." So high-risk preference data cannot be produced with a lightweight annotation mindset; it must be designed as an auditable, traceable, reviewable workflow system.

First, the definition of preference in high-risk tasks must be front-loaded, not left to annotators' ad-hoc judgment. Before annotation begins, the team must decompose the task into actionable dimensions — factual correctness, scope of authority, presence of limiting conditions, whether high-risk advice is given, whether necessary cautions are omitted, whether the answer should be a refusal or a handoff. These must enter not only the annotation spec but, where necessary, the annotation UI and sample metadata. Otherwise, the annotation devolves into "pick a more decent-looking answer by feel," which has almost no governance value in high-risk systems.

Second, candidate generation should already include rule-based pre-filtering. Candidates that clearly violate rules, miss critical information, are obviously incomplete, or fail the minimum business format should not enter the expensive expert-comparison stage. This saves annotation cost and, before training, also establishes a baseline filter so the high-risk sample pool isn't polluted by low-quality noise.